Text indexing in Python - constructing FSA from unsorted input

In the last post we discussed finite-state automata in general and at the end the algorithm for constructing such automata was presented. One caveat the previous algorithm had was that its input had to be sorted. In this short read I will present the algorithm which handles any input, regardless of a sort order.

First let’s take a look at what is the actual problem that arises when the data is not sorted. Here’s the example from previous post:

words = ['wisp', 'wasp']

s, reg = minimal_automaton_from_words(words)

Now let’s add the “wisper” word at the end. Mind this will make the input not sorted:

words = ['wisp', 'wasp', 'wisper']

s, reg = minimal_automaton_from_words(words)

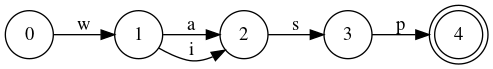

Can you spot what’s wrong? This automaton encodes one additional word: “wasper”.

The proper automaton which recognizes these words should be this:

words = sorted(['wisp', 'wasp', 'wisper'])

s, reg = minimal_automaton_from_words(words)

Algorithm description

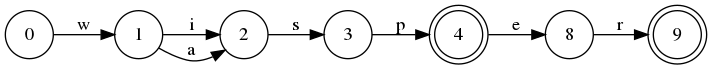

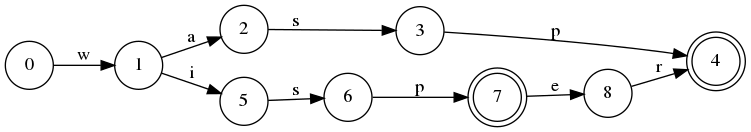

So how do we get from the ['wisp', 'wasp'] (A) automaton to the correct ['wisp', 'wasp', 'wisper'] (B) automaton? It doesn’t seem like we can just append some states in a correct place and minimize them… The problem is that in the automaton with “wisper” present the representations of “wisp” and “wasp” cannot share the “sp” suffix anymore. So before adding the “wisper” word to the automaton we have to reverse the minimization.

We don’t have to reverse the minimization of the whole automaton, we just need to do that on the part that shares the common prefix with the word we’re adding. Let’s take a look at the automaton A. The common prefix with the “wisper” word are states s0 to s4 and on this path the fist state that is a result of minimization is the s2 state. We will call this kind of state a confluence state. In essence all states which have more than on predecessor will be confluence states.

So now let’s get back to reversing the minimization. We need to clone states s2, s3 and s4. We first clone s4 and create what will become s7 in the new automaton. Now we append the “er” suffix to the s7 and minimize it. The minimization may create a new confluence state (example in the paper [1]) so we need to check for the first confluent state again. Now we can clone all the way to the first confluence state. In this case nothing has changed so the first confluence state is still s2. We clone s2 and s3 creating respectively states s5 and s6. Then we make the “i” transition from s1 point to the clone of s2 which is s5.

The last thing we have to do is update the registry entries for all the not yet processed states of the prefix (s0, s1), as their languages might’ve changed.

The code

So now after we have the rough idea let’s take a look at the code and explain it a bit. As always the devil is in the details.

Managing the confluence states

The important part of the algorithm is keeping track of the confluence states so that we know which states we have to clone.

First let’s take a look at a slightly modified State code. It was explained before so now it’s only pasted for clarity:

class State(object):

def __init__(self, label):

self.label = label

self.transitions = OrderedDict()

self.final = False

def next_state(self, c):

return self.transitions.get(c, None)

def accepts(self, w):

s = self

for c in w:

s = s.next_state(c)

if s is None:

return False

return s.final

def add_transition(self, c, state):

self.transitions[c] = state

def remove_transition(self, c):

return self.transitions.pop(c)

def clone(self, label):

s = self.new(label)

s.transitions = OrderedDict(self.transitions)

s.final = self.final

return s

def add_transition_to_a_new_state(self, c, label):

s = self.new(label)

self.add_transition(c, s)

return s

def set_final(self, final):

self.final = final

def last_transition(self):

k = next(reversed(self.transitions), None)

if k is not None:

return k, self.transitions[k]

else:

return None

def add_suffix(self, word, labels):

state = self

for c in word:

state = state.add_transition_to_a_new_state(c, next(labels))

state.final = True

@classmethod

def new(cls, label):

return cls(label)

We need to extend the definition of state a bit. The ConfluenceAwareState is a subclass of State which keeps track of the number of predecessors to each state using the confluence_counter attribute.

class ConfluenceAwareState(State):

def __init__(self, label):

super().__init__(label)

self.confluence_counter = 0

def add_transition(self, c, state):

if c in self.transitions:

if self.transitions[c] != state:

self.transitions[c].confluence_counter -= 1

state.confluence_counter += 1

self.transitions[c] = state

def clone(self, label):

for s in self.transitions.values():

s.confluence_counter += 1

return super().clone(label)

def add_suffix(self, word, labels):

if self.final:

self.confluence_counter += 1

super().add_suffix(word, labels)

When adding a new transition we first need to check if we don’t overwrite the existing transition. If so we need to decrease the confluence_counter of the old state. In any case we need to increase the counter of newly added state.

When we clone we copy all the transitions too. So we need to increase confluence counters of all the states to which we can transition to from the current state.

And last bit: we need to increase the confluence_counter if we add a suffix to the state which is final. If you think about it final flag is like branching out to a virtual final state.

The states Register

The second important bit is the states register, that keeps the track of the states we can use for minimization.

The previous algorithm had one great property, once the states were minimized they didn’t change anymore so most states of the automaton currently built were immutable. In the case of unsorted input any of the states may be subject to change. Because of that the register can have an outdated information and we need to deal with that.

I opted for lazy removing of the outdated states instead of diligently tracking if they’ve changed. When the state is requested I check if it’s current key is the same as the key it’s stored under. If it’s not I delete it.

class CheckRegister(Register):

def __init__(self, key):

super().__init__(key)

def get(self, state):

k = self.key(state)

v = self.states.get(k, None)

if v is not None:

if self.key(v) == k:

return v

else:

del self.states[k]

return None

The algorithm

So now it’s the time for the algorithm. The most important part here is the add_out_of_order_word_to_automaton method, which is quite big, so I added the comments inside. The method minimal_automaton_from_unsorted_words glues everything together and the rest of the methods are hopefully explained by their names. This is quite a lot to dig in so take your time:

def add_out_of_order_word_to_automaton(word, start_state, register, labels):

pref_last_state, pref_idx = common_prefix(start_state, word)

current_suffix = word[pref_idx:]

if current_suffix or not pref_last_state.final:

# we proceed only if current_suffix is not empty or

# last_state is non-final

bef_conf_state, bef_conf_idx = first_before_confluent_state(

start_state,

word[:pref_idx]

)

if bef_conf_state is not None:

# if there is a confluence state downstream clone

pref_last_state = pref_last_state.clone(next(labels))

# now add suffix and replace/register recursively

pref_last_state.add_suffix(current_suffix, labels)

if current_suffix:

replace_or_register_on_path(

pref_last_state,

register,

current_suffix

)

if bef_conf_state is not None:

# check again for confluent states as some might have been created

# by minimizing suffix

bef_conf_state, bef_conf_idx = first_before_confluent_state(

start_state,

word[:bef_conf_idx + 1] # +1 bc if we don't find before we'll find previous one

)

pref_states_to_clone = get_word_path(bef_conf_state, word[bef_conf_idx:pref_idx-1])

# pref_states_to_clone contains all the states we need to clone, with the first

# confluent state at the beggining

for word_idx in range(pref_idx-1, bef_conf_idx - 1, -1):

path_idx = word_idx - bef_conf_idx

if path_idx > 0:

# we clone all the states upstream from the confluent state

current_state = pref_states_to_clone[path_idx].clone(next(labels))

else:

# we keep the confluent state as it is

current_state = pref_states_to_clone[path_idx]

current_state.add_transition(word[word_idx], pref_last_state)

replace_or_register_one_state(current_state, register, pref_last_state, word[word_idx])

pref_last_state = current_state

else:

bef_conf_idx = pref_idx

upstream_states_to_register = get_word_path(start_state, word[:bef_conf_idx-1])

# here are all the states upstream of confluent state which we need to

# possibly add to the register

start_idx = len(upstream_states_to_register) -1

for idx in range(start_idx, -1, -1):

current_state = upstream_states_to_register[idx]

is_modified = replace_or_register_one_state(

current_state,

register,

pref_last_state,

word[idx]

)

if not is_modified:

# if we didn't replace the state we won't have to replace any

# upstream states so finish here

break

pref_last_state = current_state

def first_before_confluent_state(state, word):

current_state = state

for i, c in enumerate(word):

s = current_state.next_state(c)

if s is not None:

if s.confluence_counter > 1:

return current_state, i

current_state = s

else:

return None, None

return None, None

def get_word_path(start_state, word):

path = [start_state]

current_state = start_state

for c in word:

current_state = current_state.next_state(c)

assert current_state is not None

path.append(current_state)

return path

def replace_or_register_one_state(state, register, last_child, last_c):

eq_state = register.get(last_child)

if eq_state is None:

register.put(last_child)

return False

elif eq_state != last_child:

state.add_transition(last_c, eq_state)

return True

else:

return False

def replace_or_register_on_path(state, register, word):

last_c = word[0]

last_child = state.next_state(last_c)

if last_child.transitions and word[1:]:

replace_or_register_on_path(last_child, register, word[1:])

replace_or_register_one_state(state, register, last_child, last_c)

def get_word_path(start_state, word):

path = [start_state]

current_state = start_state

for c in word:

current_state = current_state.next_state(c)

assert current_state is not None

path.append(current_state)

return path

def minimal_automaton_from_unsorted_words(words):

register = CheckRegister(key_obj)

labels = inf_labels()

start_state = ConfluenceAwareState(next(labels))

for word in words:

add_out_of_order_word_to_automaton(word, start_state, register, labels)

return start_state, register

Ending notes

As with the previous post I have to mention this is just a toy implementation. One of the apparent problems with it (apart from the ones mentioned in the previous post) is that lazy removing of the states from the register may make it blow up in the memory. This and other problems may be addressed in the future posts so stay tuned and check for the updates.